Designing a personalized ranking system for more than 2 billion people (all with different interests) and a plethora of content to select from presents significant, complex challenges. This is something we tackle every day with News Feed ranking. Without machine learning (ML), people’s News Feeds could be flooded with content they don’t find as relevant or interesting, including overly promotional content or content from acquaintances who post frequently, which can bury the content from the people they’re closest to. Ranking exists to help solve these problems, but how can you build a system that presents so many different types of content in a way that’s personally relevant to billions of people around the world? We use ML to predict which content will matter most to each person to support a more engaging and positive experience. Models for meaningful interactions and quality content are powered by state-of-the-art ML, such as multitask learning on neural networks, embeddings, and offline learning systems. We are sharing new details of how we designed an ML-powered News Feed ranking system.

Building a ranking algorithm

To understand how this works, let’s start with a hypothetical person logging in to Facebook: We’ll call him Juan. Since Juan’s login yesterday, his good friend Wei posted a photo of his cocker spaniel. Another friend, Saanvi, posted a video from her morning run. And his favorite Page published an interesting article about the best way to view the Milky Way at night, while his favorite cooking Group posted four new sourdough recipes.

Because Juan is connected to or has chosen to follow the producers of this content, it’s all likely to be relevant or interesting to him. To rank some of these things higher than others in Juan’s News Feed, we need to learn what matters most to Juan and which content carries the highest value for him. In mathematical terms, we need to define an objective function for Juan and perform a single-objective optimization.

Take Saanvi’s running video, for example. On Facebook, one concrete observable signal that an item has value for someone is if they click the like button. Given various attributes we know about a post (who is tagged in a photo, when it was posted, etc.), we can use the characteristics of the post Xit toward viewer j at time t, and predict Yijt (whether Juan might like the post). Mathematically, for each post i, we estimate Yijt = f(xijt1;xijt2; … xijtC), where c represents a characteristic c (1..C) such as the type of post or the relationship between the viewer and the author of the post (e.g., whether they marked each other as family members) and the function f(.) combines the attributes into a single value.

For example, if Juan tends to interact with Saanvi a lot or share the content Saanvi posts, and the running video is very recent (e.g., from this morning), we might see a high probability that Juan likes content like this. On the other hand, perhaps Juan has previously engaged more with video content than photos, so the like prediction for Wei’s cocker spaniel photo might be lower. In this case, our ranking algorithm would rank Saanvi’s running video higher than Wei’s cocker spaniel photo because it predicts a higher probability that Juan will like that piece of content.

But is liking the only way Juan expresses his preferences? Surely not. He might share articles he finds interesting, watch videos from his favorite game streamers, or leave thoughtful comments on posts from friends. Things get more mathematically complicated when we need to optimize for multiple objectives that all contribute to our overarching objective (creating the most long-term value for people). You can have multiple values (Yijtk), e.g., likes, comments, and shares, each for a different value of k, that all need to somehow aggregate up to a single Vijt value. To complicate things further, for each person on Facebook there are thousands of signals we need to evaluate to determine what that person might find most relevant, so the algorithm gets very complex in practice.

How do you pick the overarching value for an ecosystem the size of Facebook? We want to provide the people using our services with long-term value. How much does seeing this friend’s running video or reading an interesting article create value for Juan? We think the best way to assess whether something is creating long-term value for someone is to pick metrics that are aligned with what people say is important to them. So we survey people about how meaningful they found an interaction with their friends or whether a post is worth their time to make sure our values (Yijtk) reflect what people say they find meaningful.

Multiple prediction models provide us with multiple predictions for Juan: a probability he’d engage with (e.g., like or leave a comment on) Wei’s cocker spaniel picture, Saanvi’s running video, the article shared on the Page, and the cooking Group posts. Each of these models will try to rank each of these pieces of content for Juan. Sometimes the models disagree (e.g., Juan might like Saanvi’s running video with a higher probability than the Page article, but he might be more likely to share the article than Saanvi’s video), and the way we take each prediction into account for Juan is based on the actions that people tell us (via surveys) are more meaningful and worth their time.

Approximating the ideal ranking function in a scalable ranking system

Now that we know the theory behind ranking (as exemplified through Juan’s News Feed), we need to determine how to build a system for this optimization. We need to score all the posts available for more than 2 billion people (more than 1,000 posts per user, per day, on average), which is challenging. And we need to do this in real time — so we need to know if an article has received a lot of likes, even if it was just posted minutes ago. We also need to know if Juan liked a lot of other content a minute ago, so we can use this information optimally in ranking.

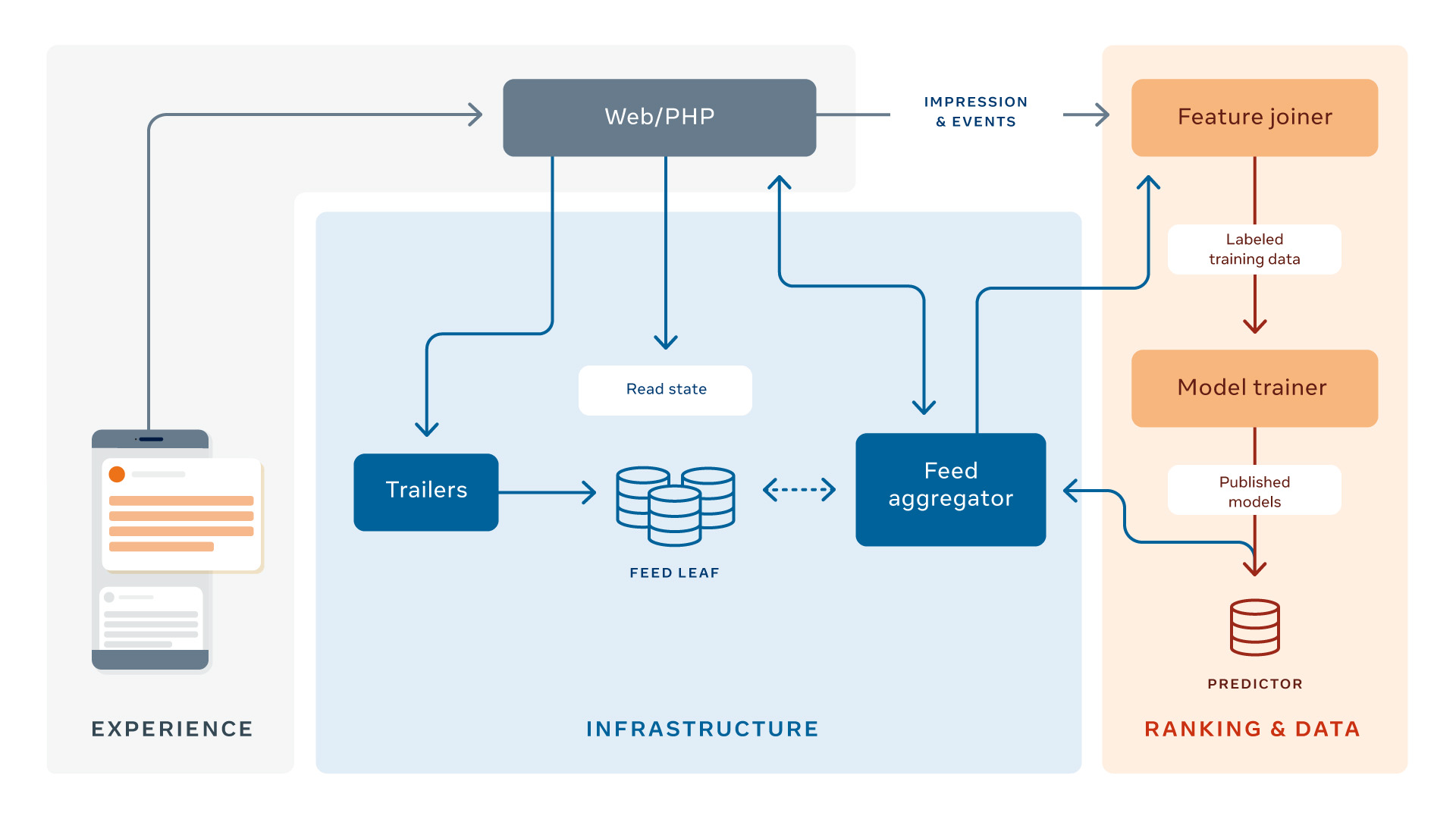

Our system architecture uses a Web/PHP layer, which queries the feed aggregator. The role of the feed aggregator is to collect all relevant information about a post and analyze all the features (e.g., how many people have liked this post before) in order to predict the post’s value Yijt to the user, as well as the final ranking score Vijt by aggregating all the predictions.

Now let’s review how the aggregator works:

- Query inventory. We first need to collect all the candidate posts we can possibly rank for Juan (the cocker spaniel picture, the running video, etc.). The first part is fairly straightforward: The eligible inventory includes any non-deleted post shared with Juan by a friend, Group, or Page that he is connected to that was made since his last login. But what about posts created before Juan’s last login that he hasn’t seen yet? Maybe these were higher quality or more relevant than the newer posts, but he simply didn’t have time to look at them. To make sure unseen posts are also reconsidered, we have an unread bumping logic: Fresh posts that Juan has not yet seen but that were ranked for him in his previous sessions are eligible again for him to see. We also have an action-bumping logic: If any posts Juan has already seen have triggered an interesting conversation among his friends, Juan may be eligible to see this post again as a comment-bumped post.

- Score Xit for Juan for each prediction (Yijt). Now that we have Juan’s inventory, we score each post using multitask neural nets. There are many, many features (xijtc) we can use to predict Yijt, including the type of post, embeddings (i.e., feature representations generated by deep learning models), and what the viewer tends to interact with. To calculate this for more than 1,000 posts, for each of billions of users — all in real time — we run these models for all candidate stories in parallel on multiple machines, called predictors.

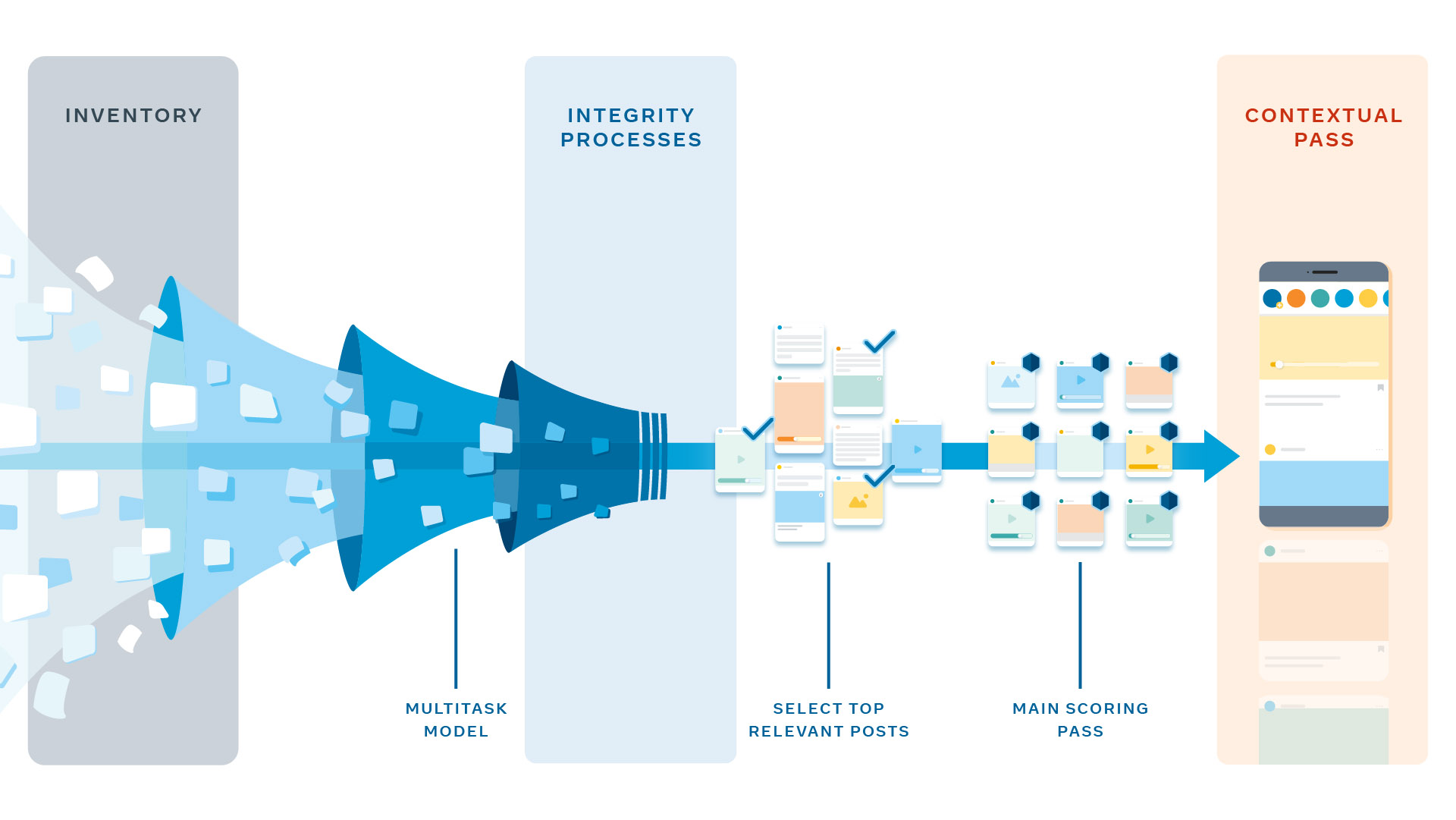

- Calculate a single score out of many predictions: Vijt. Now that we have all the predictions, we can combine them into a single score. To do this, multiple passes are needed to save computational power and to apply rules, such as content type diversity (i.e., content type should be varied so that viewers don’t see redundant content types, such as multiple videos, one after another), that depend on an initial ranking score. First, certain integrity processes are applied to every post. These are designed to determine which integrity detection measures, if any, need to be applied to the stories selected for ranking. Then, in pass 0, a lightweight model is run to select approximately 500 of the most relevant posts for Juan that are eligible for ranking. This helps us rank fewer stories with high recall in later passes so that we can use more powerful neural network models. Pass 1 is the main scoring pass, where each story is scored independently and then all ~500 eligible posts are ordered by score. Finally, we have pass 2, which is the contextual pass. Here, contextual features, such as content-type diversity rules, are added to help diversify Juan’s News Feed.

- A deeper look at pass 1: Most of the personalization happens in pass 1. We want to optimize how we combine Yijtkinto Vijt. For some, the score may be higher for likes than for commenting, as some people like to express themselves more through liking than commenting. For simplicity and tractability, we score our predictions together in a linear way, so that Vijt = wijt1Yijt1 + wijt2Yijt2 + … + wijtkYijtk. Note that this linear formulation has an advantage: Any action a person rarely engages in (for instance, a like prediction that’s very close to 0) automatically gets a minimal role in ranking, as Yijtk for that event is very low. To personalize beyond this dimension, we continue researching personalization based on observational data. People with higher correlation gain more value from that specific event, as long as we make this method incremental and control for potential confounding variables.

Once we’ve completed these ranking steps, we have a scored News Feed for Juan (and all the people using Facebook) in real time, ready for him to consume and enjoy.

Now that you understand the science, ranking architecture, and engineering behind News Feed more, you can see how our ranking algorithm helps create a valuable experience for people at previously unimaginable scale and speed. Juan benefits by seeing more personally meaningful and interesting content when he comes to Facebook, and so do billions of other people. We are constantly improving our ranking system by iterating on our prediction models, enhancing personalization, and more to help people find the content that creates value and helps them stay connected to friends and family.