- At Meta, we’ve been diligently working to incorporate privacy into different systems of our software stack over the past few years. Today, we’re excited to share some cutting-edge technologies that are part of our Privacy Aware Infrastructure (PAI) initiative. These innovations mark a major milestone in our ongoing commitment to honoring user privacy.

- PAI offers efficient and reliable first-class privacy constructs embedded in Meta infrastructure to address complex privacy issues. For example, we built Policy Zones that apply across our infrastructure to address restrictions on data, such as using it only for allowed purposes, providing strong guarantees for limiting the purposes of its processing.

- As we expanded PAI across Meta, increasing its maturity, we gained valuable insights. Our understanding of the technology evolved, revealing the need for a larger investment than initially planned to create a cohesive ecosystem of libraries, tool suites, integrations, and more. These investments have been crucial in enforcing complex purpose limitation scenarios while ensuring scalability, reliability, and a streamlined developer experience.

Purpose limitation, a core data protection principle, is about ensuring data is only processed for explicitly stated purposes. A crucial aspect of purpose limitation is managing data as it flows across systems and services. Commonly, purpose limitation can rely on “point checking” controls at the point of data processing. This approach involves using simple if statements in code (“code assets”) or access control mechanisms for datasets (“data assets”) in data systems. However, this approach can be fragile as it requires frequent and exhaustive code audits to ensure the continuous validity of these controls, especially as the codebase evolves. Additionally, access control mechanisms manage permissions for different datasets to reflect various purposes using mechanisms like access control lists (ACLs), which requires the physical separation of data into distinct assets to ensure each maintains a single purpose. When Meta started to address more and larger-scope purpose limitation requirements that crossed dozens of our systems, these point checking controls did not scale.
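To make the fragility concrete, here is a minimal Python sketch of a point check. The asset name, purpose names, and helper functions are all hypothetical and are not taken from Meta's codebase; the sketch only illustrates the general pattern of an if statement at the point of access.

```python
# Hypothetical point check; asset and purpose names are illustrative only.
ALLOWED_PURPOSES = {"source_table": {"purpose_a", "purpose_b"}}

def read_asset(asset: str, purpose: str) -> str:
    # The control is a single if statement at the point of access.
    if purpose not in ALLOWED_PURPOSES.get(asset, set()):
        raise PermissionError(f"{purpose!r} is not allowed for {asset!r}")
    return f"rows from {asset}"

def copy_to_sink(asset: str, purpose: str, sink: list) -> None:
    # The check passes here, but the copy stored in `sink` carries no
    # restriction of its own; every later reader needs a separate check.
    sink.append(read_asset(asset, purpose))

sink_table: list = []
copy_to_sink("source_table", "purpose_a", sink_table)
```

Once the data lands in `sink_table`, nothing ties it back to the original restriction, which is why each new code path or copy has to be audited separately.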

At Meta, millions of data assets are crucial for powering our product ecosystem, optimizing machine learning models for personalized experiences, and ensuring our products are high quality and meet user expectations. Identifying which code branches and data assets require protection is challenging due to complex propagation requirements and permissions models that need constant revision. For example, when a data consumer reads from one data asset (“source”) and stores the output in another (“sink”), point checking controls would require complex orchestration to ensure propagation from sources to sinks, which can become operationally unviable.

To address this problem, point checking controls can be enhanced by leveraging data flow signals. Data flows can be tracked from the same origin, where relevant data is collected, using various techniques such as static code analysis, logging, and post-query processing. This creates a graph, known as “data lineage,” that tracks the relationships between source and sink data assets. By utilizing data lineage, permissions can be applied to relevant data assets based on these source-to-sink relationships. The combination of point checking and data lineage, while viable at a small scale, leads to significant operational overhead as point checking still requires auditing many individual assets.

Building on these insights, in our latest iteration, we found that the information flow control (IFC) model offers a more durable and sustainable approach by controlling not only data access but also how data is processed and transferred in real-time, rather than relying on point checking or out-of-band audits. Thus, we developed Policy Zones as our IFC-based technology and integrated it across major Meta systems to enhance our purpose limitation capabilities at scale. This effort was later expanded into the Privacy Aware Infrastructure (PAI) initiative, a transformative investment that integrates first-class privacy support into Meta’s infrastructure systems.

We believe PAI is the right investment to protect people’s privacy at scale and can effectively enforce purpose limitation requirements.

Why invest in Policy Zones?

Through our experience deploying purpose limitation solutions over the years, we identified several key themes:

| Needs | Problem | Solution |
| --- | --- | --- |
| Programmatic Control: We needed to rely more on programmatic controls instead of point checking human audits to control data flows, and do so in real-time | Traditional point checking controls, combined with data lineage checks, can detect data transfers within a specific time frame but not in real-time. Addressing these risks requires implementing resource-intensive human audits at access points. | In contrast, PAI is designed to check data flows in real-time during code execution, blocking problematic data flows from occurring, facilitated by UX tooling, thus making it more scalable. |
| Granular Flow Control: We needed to maximize the reuse of existing data and business logic on complex infra | Access control is easy to roll out when data is separated physically, but poses significant costs, complexity, and limitations when dealing with Meta’s complex infrastructure, where data for different purposes is often processed by shared code. | PAI solves this by providing precise decision making at the granular level of individual requests, function calls, or data elements, achieving logical data separation at a relatively low compute cost even on complex infrastructures where it’s needed. |
| Adaptable and Extensible Control: We needed to handle ever-evolving requirements, even multiple for the same data assets | We are facing a rapidly changing world for privacy. Data use restrictions can vary over time depending on evolving privacy and product requirements. A single data asset or different parts of it might be subject to multiple privacy requirements. While “point checking” can address this to some extent, it struggles to control downstream data flows, even combined with data lineage. | PAI is designed to check multiple requirements involved in data flows and is highly flexible to adapt to changing requirements. |

How Policy Zones works

Let’s dive into what Policy Zones is and how we can leverage it to meet purpose limitation requirements. Policy Zones provides a comprehensive mechanism for encapsulating, evaluating, and propagating privacy constraints for data both “in transit” and “at rest,” including transitions between different systems. It evaluates constraints at runtime, propagates context, and is deeply integrated with numerous data and code frameworks (e.g., HHVM, Presto, and Spark), representing a step change in how we approach information flow control.

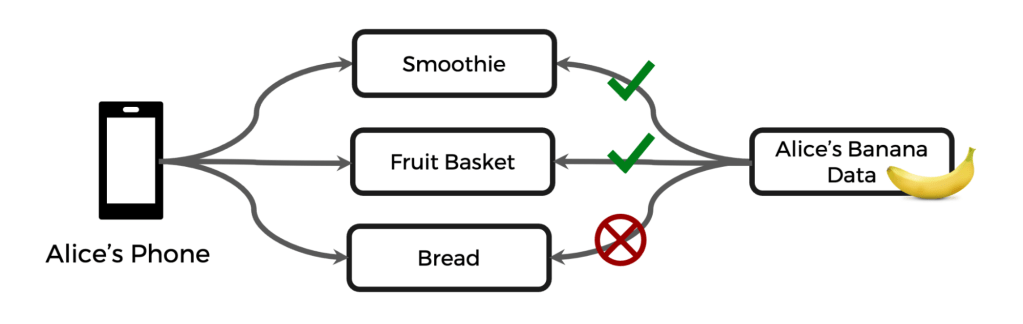

To make the explanation more relatable and bring some levity to a serious topic, we’ll use a simple example: Let’s say a new requirement comes up, where banana data can only be used for the purposes of making smoothies and fruit baskets, but not for making banana bread. For simplicity, this example and the illustration below only demonstrate the first row of the above table.

How would developers leverage Policy Zones to implement such a requirement?

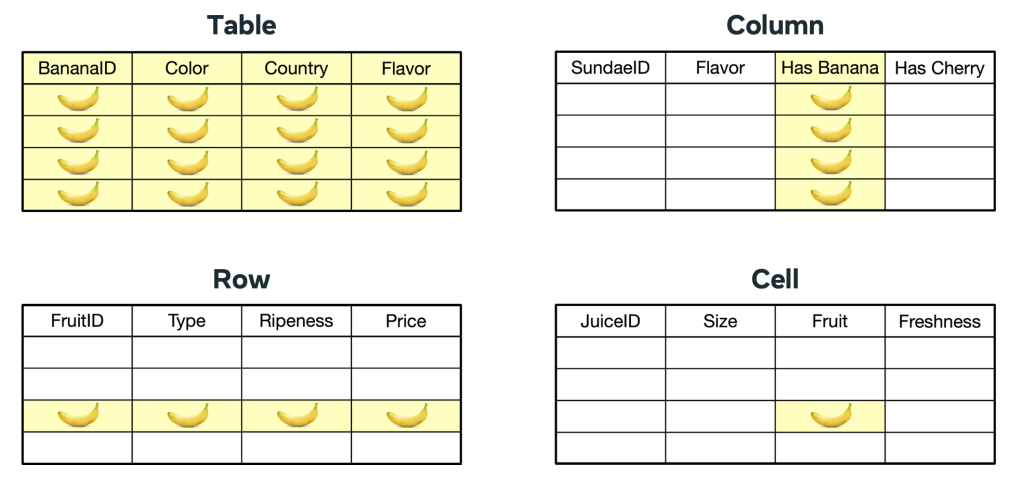

First, to demarcate relevant data assets, they assign a metadata label (“data annotation,” e.g., BANANA_DATA) to data assets at different granularities. This annotation is associated with the purpose limitation requirement as a set of data flow rules that enable systems to understand the allowed purposes for the data.
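As an illustration only, the relationship between an annotation, its flow rule, and assets at different granularities could be modeled as follows. The `FlowRule` class, the table and column names, and the lookup helper are assumptions made for this sketch; the post does not describe the actual data structures.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class FlowRule:
    """Hypothetical flow rule: which purposes an annotation permits."""
    annotation: str
    allowed_purposes: frozenset

    def allows(self, purpose: str) -> bool:
        return purpose in self.allowed_purposes

# The banana requirement from the running example.
BANANA_RULE = FlowRule("BANANA_DATA", frozenset({"smoothie", "fruit_basket"}))

# Annotations at two granularities: (table, None) labels a whole table,
# (table, column) labels a single column. Names are made up.
ANNOTATIONS = {
    ("banana_db.bananas", None): "BANANA_DATA",
    ("fruit_db.inventory", "banana_count"): "BANANA_DATA",
}

def annotation_for(table: str, column: Optional[str] = None) -> Optional[str]:
    # A column-level label wins; otherwise fall back to the table-level label.
    return ANNOTATIONS.get((table, column)) or ANNOTATIONS.get((table, None))
```

Keeping the rule separate from the label means an asset only needs to say what it contains, while the rule says what may be done with it.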

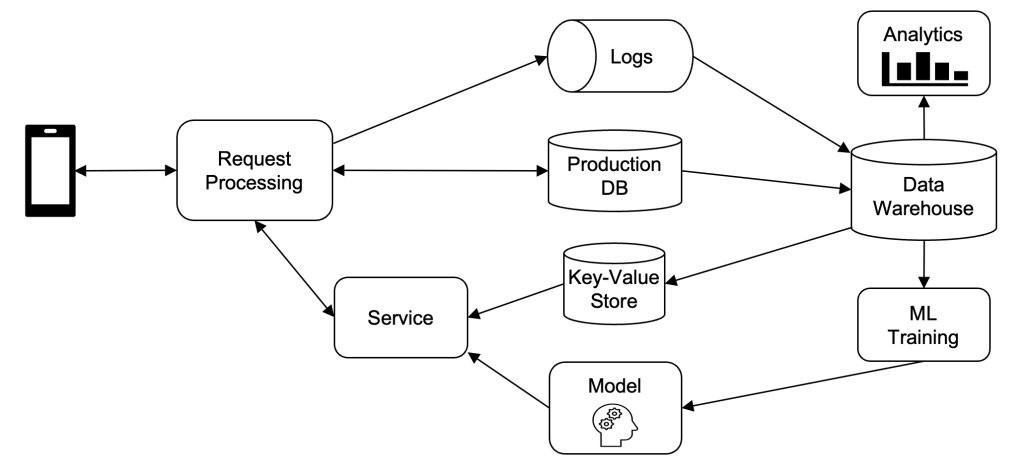

When annotated data is processed, Policy Zones kicks in and checks whether the data processing is allowed and data can flow downstream. Policy Zones has been built into different Meta systems, including:

- Function-based systems that load, process, and propagate data through stacks of function calls in different programming languages. Examples include web frontend, middle-tier, and backend services.

- Batch-processing systems that process data rows in batch (mainly via SQL). Examples include real-time and data warehouse systems that power Meta’s AI and analytics workloads.

Let’s dive deeper into how Policy Zones works for function-based systems; the same logic applies to batch-processing systems as well.

In function-based systems, data is passed through parameters, variables, or return values in a stack of function calls.

Let’s walk through an example:

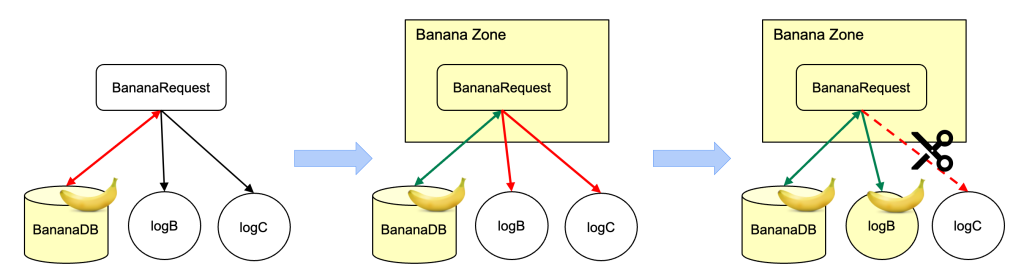

- A web request, “BananaRequest,” loads annotated data from BananaDB, causing a data flow violation because the intent of the caller is unknown.

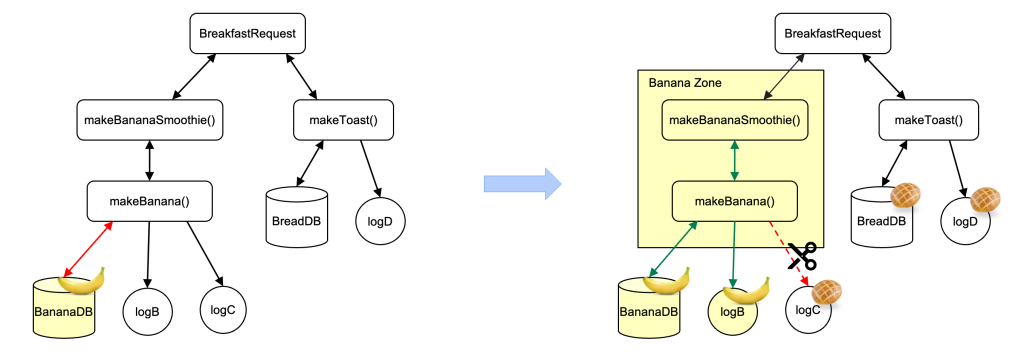

- To remediate the data flow violation, we annotate BananaRequest with the BANANA_DATA label, creating a zone (“Banana Zone”) for the request.

- Behind the scenes at runtime, Policy Zones programmatically checks all data flows against the flow rules based on the context, flagging new data flow violations from BananaRequest to logB and logC.

- We annotate logB with the BANANA_DATA label and remove the logging of banana data into logC to cut off the disallowed data flow.

- With all data flow violations remediated, the zone can be moved from logging mode to enforcement. If a developer adds a write to a sink outside of the zone, it will be blocked automatically.

In a more complex scenario, a function, “makeBananaSmoothie(),” invoked from a web request, “BreakfastRequest,” calls another function, “makeBanana().” Besides the previous data flow violations, we need to remediate another one: makeBanana() returns banana data to makeBananaSmoothie(). To do so, we can create a “Banana Zone” from the function makeBananaSmoothie() that includes all functions that it calls directly or indirectly.

In batch-processing systems, data is processed in batches for rows from tables that are annotated as containing relevant data. When a job runs a query (usually SQL-based) to process the data, a zone is created and Policy Zones flags any data flow violations. Remediation options are provided, similar to those for function-based systems. Once all violations have been remediated, the zone can be moved from logging mode to enforcement mode to prevent future data flow violations. Data annotation can be done at various levels of granularity, including table, column, row, or potentially even cell.
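For the batch case, a simplified check might compare the annotations on the columns a job reads with the annotations on the table it writes. The sketch below assumes the input columns have already been extracted from the query (it does not parse SQL), and the table and column names are invented.

```python
# Hypothetical column-level and table-level annotations.
COLUMN_ANNOTATIONS = {
    ("fruit_sales", "banana_units"): {"BANANA_DATA"},
    ("fruit_sales", "apple_units"): set(),
}
TABLE_ANNOTATIONS = {
    "banana_report": {"BANANA_DATA"},
    "apple_report": set(),
}

def check_job(input_columns, output_table):
    """Return the annotations read by a job that its output table lacks.

    An empty result means the job has no data flow violation.
    """
    required = set()
    for column in input_columns:
        required |= COLUMN_ANNOTATIONS.get(column, set())
    return required - TABLE_ANNOTATIONS.get(output_table, set())
```

A job that reads `banana_units` and writes to `apple_report` returns `{"BANANA_DATA"}`, which would be logged in logging mode and blocked in enforcement mode; the same job writing to `banana_report` returns an empty set.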

When data flows across different systems (e.g., from frontend, to data warehouse, then to AI), Policy Zones ensures that relevant data is annotated correctly and thus continues to be protected according to the requirements. For some systems that don’t have Policy Zones integrated yet, the point checking control is still used to protect the data.

How we applied PAI to existing systems at scale

The above gives you a glimpse into how the technology is used to roll out a simple use case. However, adopting Policy Zones is a non-trivial task for complex requirements across tens or hundreds of systems. The requirement owner usually collaborates with other engineers who are code and data asset owners across Meta to implement different aspects of that requirement. In some cases, completing the implementation and audits may involve hundreds or thousands of engineers. To address this challenge, PAI offers Policy Zone Manager (PZM), a suite of UX tools that helps requirement owners efficiently enforce privacy requirements using PAI.

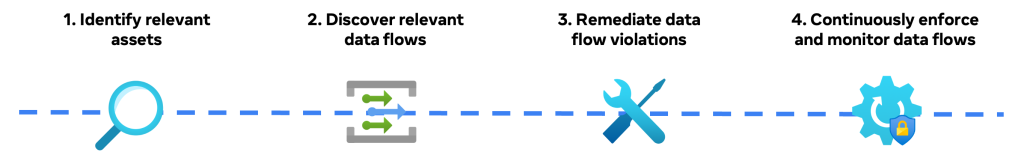

Let’s take a look at how PZM makes it easy for people to satisfy their purpose limitation needs in existing systems, using the above banana requirement as an example. At a high level, the requirement owner carries out the following workflow, facilitated by PZM:

- Identify relevant assets: This is to identify which source assets need to be purpose limited for the given requirement.

- Discover relevant data flows: This is to discover the downstream data flows from the source assets in order to integrate Policy Zones at scale.

- Remediate data flow violations: This is to allow people to choose which option to take to remediate data flow violations.

- Continuously enforce and monitor data flows: This is to turn on Policy Zones enforcement and monitor it to prevent new data flow violations.

To hear more about this process, check out our presentation at the PEPR conference in June 2024.

Step 1 – Identify relevant assets

For a given requirement, we check the relevant product entry points (e.g., mobile apps, web requests, and databases) to pinpoint data assets that are collected. These assets may take the form of request parameters, database entries, or event log entries. We use data structures to represent (“schematize”) these data assets and fields, capturing relevant data at various granularities. In the running example, a table in the banana database might contain entirely banana data, a single banana column, or a mix of banana and other fruit data.

In addition to manual code inspection, we heavily rely on various techniques such as our scalable ML-based classifier to automatically identify data assets.

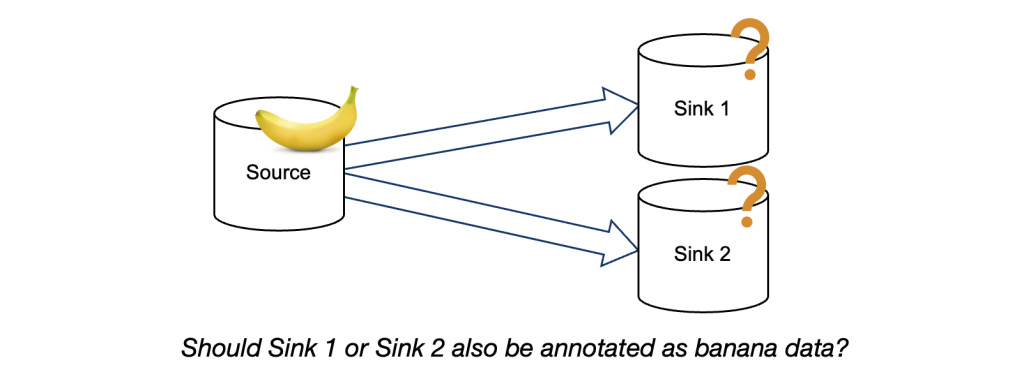

Step 2 – Discover relevant data flows

From a given annotated source, the requirement owner can identify its downstream data flows and sinks (see diagram below). The owner can then decide how to handle these data flows. However, this process can be time consuming when there are many data flows that are one or more hops away from the same origin. This often occurs when implementing a new requirement over existing data flows.

Although data lineage presents significant operational overhead for point checking mechanisms, it can efficiently identify where to integrate Policy Zones into the codebase. Therefore, we have integrated data lineage into PZM, allowing requirement owners to discover multiple downstream assets from a given source simultaneously. Once the requirement has been fully implemented, we can rely solely on Policy Zones to enforce the requirements.
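As a rough sketch of this discovery step, a breadth-first walk over lineage edges can list every downstream flow whose sink has not been annotated yet, along with its hop distance from the source. The edge list, the `annotated` set, and the function name are assumptions for illustration; PZM's actual interface is not described in this post.

```python
from collections import deque

def flows_to_review(origin, edges, annotated):
    """Walk downstream from `origin` and return (source, sink, hops)
    for every flow whose sink is not yet annotated."""
    children = {}
    for src, dst in edges:
        children.setdefault(src, []).append(dst)

    pending = []
    seen = {origin}
    queue = deque([(origin, 0)])
    while queue:
        node, hops = queue.popleft()
        for sink in children.get(node, []):
            if sink not in annotated:
                pending.append((node, sink, hops + 1))
            if sink not in seen:
                seen.add(sink)
                queue.append((sink, hops + 1))
    return pending

# Hypothetical lineage for the banana example.
EDGES = [
    ("banana_db", "logB"),
    ("banana_db", "logC"),
    ("logB", "smoothie_stats"),
]
review_list = flows_to_review("banana_db", EDGES, annotated={"banana_db", "logB"})
```

Here `review_list` contains the flow into `logC` at one hop and the flow into `smoothie_stats` at two hops, which is the list a requirement owner would then remediate.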

Step 3 – Remediate data flow violations

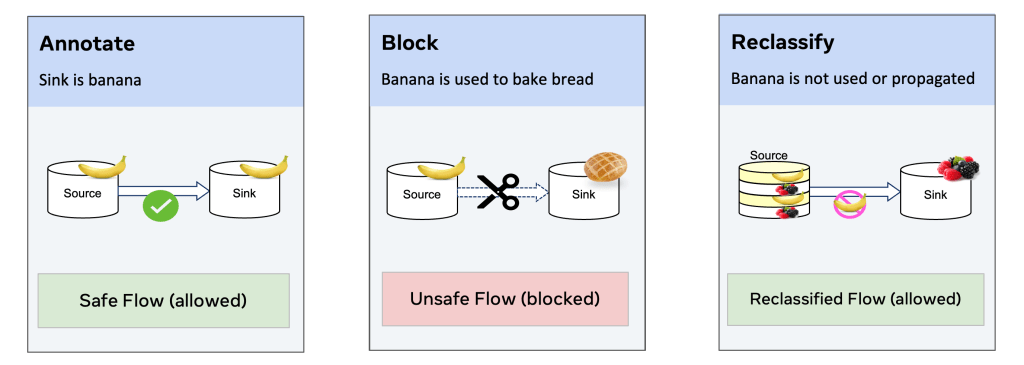

By default, the data flow from a source asset to a sink must meet all of the requirements of the source. If not, it’s considered a data flow violation and needs remediation, enforced by Policy Zones programmatically at runtime. There are three main cases to remediate data flow violations (using the running example to help concretize the general cases):

- Case 1: Safe flow – relevant data is used for allowed purpose(s): Assign the banana annotation to the sink asset.

- Case 2: Unsafe flow – relevant data is used for disallowed purpose(s): Block data access and code execution to prevent further processing of banana data.

- Case 3: Reclassified flow – relevant data is not used or propagated: Mark the data flow as reclassified, which permits it, because banana data from the source is not used or propagated to the sink.
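The three cases can be summarized as a small decision table. In practice a person makes this choice with the help of tooling; the function below is only a hypothetical encoding of the cases, with made-up parameter names.

```python
from enum import Enum

class Remediation(Enum):
    ANNOTATE_SINK = "safe flow: assign the annotation to the sink"
    BLOCK = "unsafe flow: block data access and code execution"
    RECLASSIFY = "reclassified flow: mark the flow as permitted"

def choose_remediation(data_reaches_sink: bool, sink_purpose: str, allowed: set) -> Remediation:
    # Case 3: the relevant data is not used or propagated to the sink.
    if not data_reaches_sink:
        return Remediation.RECLASSIFY
    # Case 1: the data is used for an allowed purpose.
    if sink_purpose in allowed:
        return Remediation.ANNOTATE_SINK
    # Case 2: the data is used for a disallowed purpose.
    return Remediation.BLOCK

ALLOWED = {"smoothie", "fruit_basket"}
```

For example, a flow that carries banana data into a banana bread pipeline maps to `Remediation.BLOCK`, while a flow into a smoothie pipeline maps to `Remediation.ANNOTATE_SINK`.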

Step 4 – Continuously enforce and monitor data flows

PAI is integrated into our major data systems to check data flows and catch violations at runtime. During the initial rollout of a new requirement, Policy Zones can run in “logging mode,” where flow violations are flagged so that they can be remediated. Once Policy Zones enforcement is enabled, any data flow with unremediated violations is denied. This also prevents new data flow violations, even if code changes or new code is added.

PAI continuously monitors the enforcement of requirements to ensure that it operates correctly. PZM provides a set of verifiers to check the accuracy of asset annotations and control configurations.

Lessons learned from adoption at scale across Meta

As PAI has been adopted by a multitude of purpose limitation requirements across Meta, we’ve learned several key lessons over the past few years:

Focus on solving one specific end-to-end use case first

Initially, we developed Policy Zones for batch-processing systems with some basic use cases. However, we realized that our designs for function-based systems were quite abstract, and adopting them for a large-scale use case presented significant challenges: it took considerable effort to map patterns to customer needs, refine the APIs, and build the missing operational support before it worked effectively end-to-end across multiple systems. Only after addressing these challenges were we able to make it more generic and proceed with integrating Policy Zones across extensive platforms.

Streamline integration complexity

Integrating PAI coherently into Meta’s diverse systems was a complex and challenging process that took us years. For example, initially, product teams expended considerable effort to schematize data assets across different data systems. We then developed reliable, computationally efficient, and widely applicable PAI libraries in various programming languages (Hack, C++, Python, etc.) that enabled a smoother integration with a broad range of Meta’s systems.

Invest in computational and developer efficiency early on

We also undertook multiple iterations to simplify PAI and improve its computational efficiency. Our initial annotation APIs were overly complex, resulting in high cognitive overhead for engineers. Furthermore, the computational overhead of data flow checking was prohibitively high in Meta’s high-throughput systems. Through several rounds of refinement, we simplified policy lattice representation and evaluation, built language-level features to natively propagate Policy Zones context, and canonicalized policy annotation structures, achieving 10x improvements in computational efficiency.
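The post does not describe how the policy lattice is represented. As a generic illustration of why a compact representation makes evaluation cheap, the sketch below encodes allowed purposes as bit flags, so that combining policies and checking a flow each reduce to a single bitwise operation. The `Purpose` flags and helper names are assumptions for this example, not Meta's design.

```python
from enum import IntFlag

class Purpose(IntFlag):
    SMOOTHIE = 1
    FRUIT_BASKET = 2
    BANANA_BREAD = 4

# Unrestricted data may be used for any purpose.
ANY = Purpose.SMOOTHIE | Purpose.FRUIT_BASKET | Purpose.BANANA_BREAD
# The banana requirement from the running example.
BANANA_POLICY = Purpose.SMOOTHIE | Purpose.FRUIT_BASKET

def combine(a: Purpose, b: Purpose) -> Purpose:
    """Data derived from two inputs may only be used for purposes
    that both inputs allow (the lattice meet is a bitwise AND)."""
    return a & b

def flow_allowed(policy: Purpose, purpose: Purpose) -> bool:
    # A flow is allowed when the requested purpose is within the policy.
    return (policy & purpose) == purpose
```

With this encoding, mixing unrestricted data with banana data yields `BANANA_POLICY`, and `flow_allowed(BANANA_POLICY, Purpose.BANANA_BREAD)` is `False`.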

Simplified and independent annotations are a must to scale to a wide range of requirements

Initially, we employed a monolithic annotation API to model intricate data flow rules and annotate relevant code and data. However, as data from multiple requirements were combined, propagating these annotations from sources to sinks became increasingly complex, resulting in data annotation conflicts that were difficult to resolve. To address this challenge, we implemented simplified data annotations to decouple data from requirements and separate data flow rules for different requirements. This significantly streamlined the annotation process, ultimately improving developer experiences.
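A minimal sketch of this decoupling, under assumed names: assets carry only labels describing what they contain, and each requirement separately declares which label it applies to and which purposes it allows. The apple requirement is invented here purely to show two requirements being evaluated independently on the same asset.

```python
# What the data is (hypothetical asset names and labels).
DATA_ANNOTATIONS = {
    "banana_db": {"BANANA_DATA"},
    "fruit_mix": {"BANANA_DATA", "APPLE_DATA"},
}

# What may be done with it, one independent rule set per requirement.
REQUIREMENTS = {
    "banana_purpose_limitation": {
        "applies_to": "BANANA_DATA",
        "allowed": {"smoothie", "fruit_basket"},
    },
    "apple_purpose_limitation": {  # invented second requirement
        "applies_to": "APPLE_DATA",
        "allowed": {"smoothie", "pie"},
    },
}

def violated_requirements(asset: str, purpose: str) -> list:
    """Evaluate each requirement on its own and list the ones violated."""
    labels = DATA_ANNOTATIONS.get(asset, set())
    return sorted(
        name
        for name, req in REQUIREMENTS.items()
        if req["applies_to"] in labels and purpose not in req["allowed"]
    )
```

Because each requirement is checked separately, adding or changing one requirement does not require touching the labels on the data or the rules of any other requirement.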

Build tools; they are required

We have made significant efforts to make PAI easy and efficient to use, ultimately improving the developer experience. Initially, we focused on the correctness of the technology before investing in tooling. Adopting Policy Zones required a lot of manual effort, and it was challenging for engineers to understand how to properly annotate their assets, which led to additional cleanup work later. To address this issue, we developed the PZM tool family, which includes built-in automated rules and classifiers. These tools guide teams through standard workflows, ensuring safe and efficient rollout of purpose limitation requirements and reducing engineering efforts by orders of magnitude.

Durable privacy protection for everyone

Meta is committed to protecting user privacy. The PAI initiative is a crucial step in safeguarding data and preserving privacy efficiently and reliably. It provides a robust foundation for Meta to sustainably tackle privacy challenges, meet high reliability standards, and address future privacy issues more efficiently than traditional solutions. While we’ve laid a strong groundwork, our journey is just beginning. We aim to build upon this foundation by expanding our capabilities and controls to accommodate a wider range of privacy requirements, enhancing the developer experience, and exploring new frontiers.

We hope our work sparks innovation and fosters collaboration across the industry in the field of privacy.

Acknowledgements

The authors would like to acknowledge the contributions of many current and former Meta employees who have played a crucial role in productionizing and adopting PAI over the years. In particular, we would like to extend special thanks to (in alphabetical order) Adrian Zgorzalek, Alex Gorelik, Amritha Raghunath, Anuja Jaiswal, Brian Sniffen, Brian Romanko, Brian Spanton, Daniel Ramagem, David Detlefs, David Mortenson, David Taieb, Gabriela Jacques da Silva, Ian Carmichael, Itai Gal, Iuliu Rus, Jafar Husain, Jerry Pan, Jiang Wu, Joel Krebs, Jun Fang, Komal Mangtani, Marc Celani, Mark Konetchy, Matthieu Martin, Michael Levin, Nirman Gupta, Oliver Dodd, Parthiv Patel, Perry Stoll, Peter Prelich, Pieter Viljoen, Prashant Dhamdhere, Rajesh Nishtala, Rajkishan Gunasekaran, Ramnath Krishna Prasad, Rishab Mangla, Sergey Doroshenko, Seth Silverman, Sriguru Chakravarthi, Sushaant Mujoo, Tarek Sheasha, Thomas Georgiou, Uday Ramesh Savagaonkar, Vitalii Tsybulnyk, Vlad Fedorov, Wolfram Schulte, and Yi Huang. We would also like to express our gratitude to all reviewers of this post, including (in alphabetical order) Aleksandar Ilic, Benjamin Renard, Emil Vazquez, Emile Litvak, Harrison Fisk, Jason Hendrickson, Jessica Retka, Nimish Shah, Sabrina B Ross, and Sam Blatchford. We would like to especially thank Emily DiPietro for championing the idea, leading the editorial effort, and pulling all required support together to make this blog post happen.