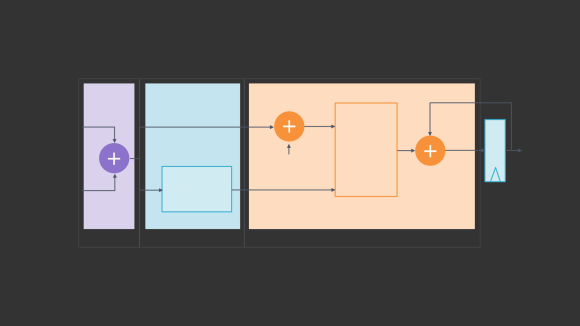

Making floating point math highly efficient for AI hardware

In recent years, compute-intensive artificial intelligence tasks have prompted the creation of a wide variety of custom hardware to run these powerful new systems efficiently. Deep learning models, such as the ResNet-50 convolutional neural network, are trained using floating point arithmetic. But because floating point has been extremely resource-intensive, AI deployment systems typically rely upon one …