- New 360 audio encoding and rendering technology makes it possible, for the first time, to maintain high-quality spatial audio throughout the pipeline, from editor to user, for large-scale consumption.

- The sound quality remains high because we support what we describe as “hybrid higher-order ambisonics,” an 8-channel system with rendering optimizations to incorporate the quality of higher-order ambisonics with fewer channels, ultimately saving on bandwidth.

- Our audio system supports spatial audio and head-locked audio simultaneously. In spatialized audio the system reacts to which way a person’s head is turned in the 360 experience when hearing sounds coming from the scene. With head-locked audio, things like narration and music stay static. Rendering with hybrid higher-order ambisonics and head-locked audio simultaneously is a first for the industry.

- The spatial audio renderer supports real-time experiences with less than half a millisecond of latency.

- The FB360 Encoder tool exports to multiple platforms. The Rendering SDK is integrated across Facebook and Oculus Video, which guarantees a unified experience from production to publication. That saves time and ensures that what you hear in production is what you publish.

360 video experiences on Facebook are amazing and immersive. To get the full experience, though, you need 360 audio too. With 360 audio, the audio sounds like it’s coming from a certain direction in space, like it would in real life. A helicopter flying above the camera should sound like it is above you, and the actor behind the camera should sound like he or she is behind you. As you look around the video, the sounds need to react and reposition themselves to where the objects are on the screen. Every time you look around in a 360 video, whether you are watching on a phone, browser, or VR headset, the audio needs to be recalculated and repositioned to sound believable.

Put simply, to make that work we had to develop a system that goes beyond the immersion you feel in a movie theater, without the benefit of a giant room in which to place speakers. We had to create a soundscape that mirrors a real-world environment, in your headphones, at a much higher resolution, and then keep track of which way you’re looking. The regular stereo audio you hear over headphones might help you understand whether a sound is playing in your left ear or right ear, but it doesn’t help you perceive depth or height or understand whether a sound is playing in front of you or behind you.

Creating such spatial audio experiences and playing them back at scale requires new technologies. While research on spatial audio is being conducted in academic arenas, until now there has been no reliable, end-to-end pipeline for bringing such technology at scale to an audience. We recently launched new user tools and rendering methods that for the first time make high-quality spatial audio possible for large-scale consumption. These rendering techniques were applied to a new, robust toolset, called Spatial Workstation, that gives creators the ability to place sound in a 360 video. The rendering was also applied to the Facebook apps, so people hear the same audio that creators upload.

Both of these advancements will enable video producers to recreate reality across a wide range of devices and platforms. In this post, we’ll explore the technical details behind what we’ve built — but first, a bit of history on spatial audio.

A quick primer on spatial audio

Hearing spatial audio over headphones is possible because of head-related transfer functions (HRTFs). HRTFs help construct audio filters that can be applied to an audio stream to make it sound like it is positioned at a specific location — above you, behind you, next to you, and so on. HRTFs are generally captured in anechoic chambers with a human subject or a model of the human head and torso, but there are other methods to generate them too.
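As a rough illustration of how such filters are applied, the sketch below convolves a mono signal with a pair of impulse responses, one per ear. The function names and the two-tap impulse responses are made up for the example and are not measured HRTF data; a real renderer would use much longer filters and a faster convolution method.

```python
def convolve(signal, impulse_response):
    """Direct-form FIR convolution of two sample lists."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def binauralize(mono, hrir_left, hrir_right):
    """Filter one mono source with a left/right impulse-response pair."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


# Toy impulse responses (hypothetical, not measured): the source sits to the
# listener's left, so the right ear hears it one sample later and quieter.
HRIR_LEFT = [1.0, 0.0]
HRIR_RIGHT = [0.0, 0.5]

left, right = binauralize([1.0, 0.5, 0.25], HRIR_LEFT, HRIR_RIGHT)
```

The delay and level difference between the two outputs are the kind of cues that let a listener place the sound to one side.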

Before people can hear the audio, a creator has to place the sound in the correct position. To put it another way, they have to design and then stream the spatial audio. There are a variety of methods that exist to do that. One approach is object-based spatial audio, a method in which the individual sounds for each object in the scene (e.g., a helicopter or an actor) are saved as discrete streams with positional metadata. Most games use an object-based system, as the positions of each audio stream might change depending on where the player moves.

Ambisonics is another method in spatial audio that describes a whole sound field. You can think of it as a 360 photograph for audio. A multichannel audio stream can be used to easily represent the whole sound field, which makes it easier to transcode and stream than object-based audio. An ambisonic stream can be represented through a variety of schemes. The biggest differentiator is the order of the ambisonic sound field. A first-order sound field results in four channels of audio data, while a third-order sound field results in 16 channels of audio data. Generally, a higher order results in better sound quality and more accurate positioning. You can think of a low-order ambisonic sound field as a blurry 360 photograph.
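The channel counts quoted above follow from the standard relationship for full-sphere ambisonics, in which a sound field of order N needs (N + 1)² channels. A small sketch, with a function name chosen only for this example:

```python
def ambisonic_channel_count(order):
    """Channels in a full-sphere ambisonic sound field of the given order."""
    if order < 0:
        raise ValueError("order must be non-negative")
    return (order + 1) ** 2


# Orders 0 through 3 give 1, 4, 9, and 16 channels.
counts = {order: ambisonic_channel_count(order) for order in range(4)}
```

The quadratic growth is why a higher order quickly becomes expensive to stream.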

Workflow and tools

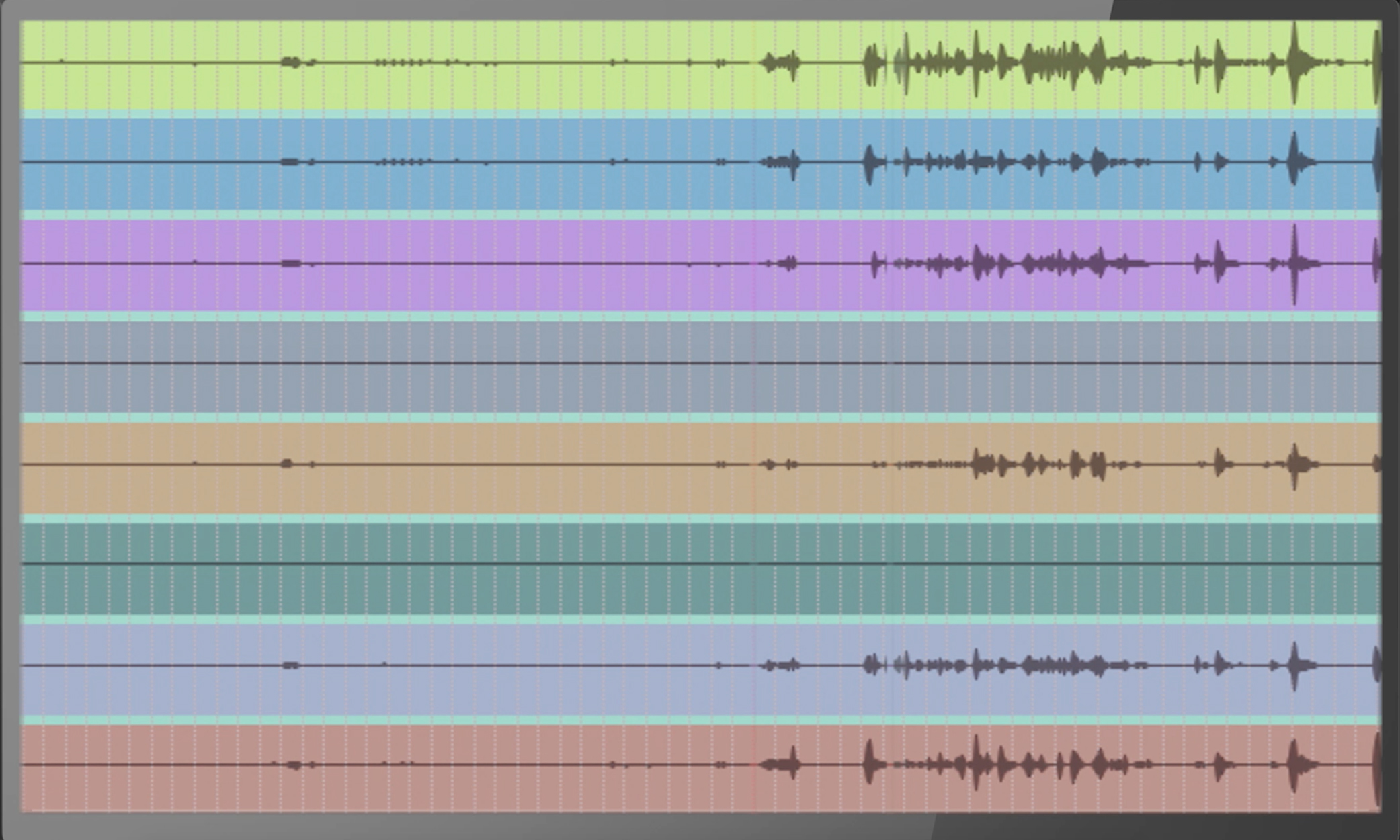

Spatial Workstation is a suite of tools we developed to help professional sound designers design spatial audio for 360 video and linear VR experiences. The Workstation extends the functionality of existing audio workstations to position sounds in 3D space with a 360 video and, simultaneously, to preview the output on a VR headset. This creates a high-quality end-to-end workflow, from content creation to publishing.

Traditional stereo audio includes two channels. The system we developed with the Spatial Workstation outputs eight channels instead. We call this a hybrid higher-order ambisonic system, as the sound field has been modified to benefit VR and 360 experiences. It is tuned to our spatial audio renderer to maximize sound quality and positional accuracy while keeping the performance requirements and latency to a minimum. The Spatial Workstation can also output two channels of head-locked audio. This is a stereo stream that does not respond to head tracking and rotation and always stays “locked” to the head. Most 360 experiences use a mix of spatialized and head-locked audio: the spatialized audio might be used for action happening within the 360 scene, and the head-locked audio might be used for narration or background music.
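The difference between the two kinds of stream can be sketched with a plain first-order sound field, which is a simplification and not the eight-channel hybrid format described above. The example assumes an axis convention of X front, Y left, Z up, an unscaled W channel, and a crude two-microphone decode standing in for an HRTF-based renderer; all names are hypothetical.

```python
import math


def rotate_yaw(w, x, y, z, head_yaw):
    """Counter-rotate one first-order sample (X front, Y left, Z up).

    head_yaw is in radians, positive when the listener turns left.
    """
    c, s = math.cos(head_yaw), math.sin(head_yaw)
    return w, x * c + y * s, y * c - x * s, z


def render_sample(field, head_locked, head_yaw):
    """Return one (left, right) output sample.

    The two-microphone decode below is a crude stand-in for an HRTF renderer.
    """
    w, x, y, z = rotate_yaw(*field, head_yaw)
    scene_left, scene_right = 0.5 * (w + y), 0.5 * (w - y)
    # Head-locked audio bypasses the rotation and is simply mixed in.
    return scene_left + head_locked[0], scene_right + head_locked[1]


# A source straight ahead (w=1, x=1, y=0) while the listener turns 90 degrees
# to the right: relative to the head, the source is now on the left.
front_source = (1.0, 1.0, 0.0, 0.0)
left, right = render_sample(front_source, (0.1, 0.1), -math.pi / 2)
```

Only the sound field is rotated by the head orientation; the head-locked pair reaches the output unchanged whichever way the listener is facing.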

Rendering

Our spatial audio renderer includes a suite of technologies we’ve developed over many years that make it easy to scale to a range of device types and configurations while still keeping the quality optimal. The renderer uses HRTFs that are parameterized and represented in such a way that their individual components can be scaled to favor performance, favor quality, or strike a balance between the two. The audio latency of our renderer is less than half a millisecond, which is an order of magnitude lower than most renderers, making it well suited to real-time experiences such as head-tracked videos.

This flexibility is helpful when targeting a range of desktop computers, mobile devices, and browsers. The renderer is tuned to sound the same and perform well across each of these target systems. This consistency matters when creating spatial audio experiences. It would be detrimental to the ecosystem if the audio were rendered to sound different on each platform or device because of performance requirements. We want to ensure consistent quality across a huge range of devices and ecosystems at Facebook’s scale.

Cross-platform

The renderer is part of the Audio360 audio engine, which can spatialize hybrid higher-order ambisonic and head-locked streams. The audio engine is written in C++ with optimized vector instructions for each platform. It is lightweight and manages queueing, spatialization, and mixing through a multithreaded and lock-free system. It also directly communicates with the audio systems on each platform (OpenSL ES on Android, Core Audio on iOS/macOS, WASAPI on Windows) to minimize output latency and maximize performance. The lightweight design not only keeps things fast but also reduces app bloat by keeping binary size small. The audio engine binary is compiled to about 100 kilobytes.

For the web, the audio engine is compiled to asm.js using Emscripten. This helps us maintain, optimize, and use the same codebase across all platforms. The code required little modification to work well in the browser. The flexibility and speed of the renderer allow us to use the same technology in the browser and maintain quality. The audio engine in this case is used as a custom processor node within WebAudio, where the audio stream is queued into the audio engine from the Facebook video player and the spatialized audio from the audio engine is passed to WebAudio for playback through the browser. Compared with the native C++ implementation, the JS version runs only between 2x and 4x slower, which is still more than adequate for real-time processing.

The flexibility and cross-platform nature of the renderer and audio engine allow us to continually improve the quality as devices and browsers get faster with every passing year.

From encoding to client delivery

The world of encoding and file formats for spatial audio is rapidly evolving and in a state of flux. We wanted to make it as easy as possible to encode and upload content made with the Spatial Workstation to Facebook, for viewing and listening on all devices that people use. The Spatial Workstation Encoder will prepare the eight-channel spatial audio and stereo head-locked audio, together with a 360 video, into one file ready to upload to Facebook.

Encoding

There were some challenges in finding a file format that would work. We had several constraints, some of which we can loosen with time but which we needed to work around to deliver an encoder sooner rather than later. The primary constraint was that to minimize quality loss in transcoding we needed to stick to Facebook’s native video format: MP4 with H.264 video and AAC audio. This implies the following practical constraints:

- AAC in MP4 supports eight channels, but not 10 channels.

- AAC encoders understand eight-channel audio to be in the 7.1 surround format, which applies an aggressive low-pass filter and other techniques to compress the LFE channel. This is incompatible with faithful rendering of spatial audio.

- MP4 metadata is extensible but cumbersome to work with using tools like ffmpeg or MP4Box.

We chose to use a channel configuration with three audio tracks in the MP4 file. The first two are four-channel tracks with no LFE, for a total of eight non-LFE channels together. The third track is the stereo head-locked audio. We encode at a high bit rate to minimize quality loss when converting from WAV to AAC, because it will be transcoded again on the server to prepare for client delivery.
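The “4+4+2” split can be pictured as slicing each 10-channel frame into three consecutive groups. This is only a sketch: it assumes the encoder starts from a single 10-channel frame with the eight spatial channels first and the head-locked pair last, and the names are hypothetical.

```python
# Two four-channel spatial tracks followed by one stereo head-locked track.
TRACK_WIDTHS = (4, 4, 2)


def split_frame(frame, widths=TRACK_WIDTHS):
    """Split one multichannel frame into consecutive per-track frames."""
    if len(frame) != sum(widths):
        raise ValueError(f"expected {sum(widths)} channels, got {len(frame)}")
    tracks, start = [], 0
    for width in widths:
        tracks.append(frame[start:start + width])
        start += width
    return tracks


frame = list(range(10))  # channel indices stand in for sample values
spatial_a, spatial_b, head_locked = split_frame(frame)
```

Keeping every track at four channels or fewer avoids the 7.1 interpretation, and with it the LFE processing, that an eight-channel AAC track would trigger.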

At Facebook, we have a core engineering value to move fast. We expect that we haven’t yet thought of all the information we might need to convey as our tools and capabilities evolve. For that reason, we wanted a metadata scheme that was forward-extensible and easy to work with. Defining our own MP4 box types felt brittle and slow, so instead we decided to put our metadata in an xml box inside a meta box. That XML could follow a schema that can evolve as quickly as we need it to. The MP4Box tool can be used to read and write this metadata from the MP4 file. We store metadata for each audio track (under the trak box), defining the channel layout for that track. Then we also write global metadata at the file level (under the moov box).

The Spatial Workstation Encoder also takes the video as input. This video is muxed into the resulting file without transcoding, and the appropriate video spatial metadata is written to the file so that it is processed as a 360 video when uploaded to Facebook.

YouTube currently has support for first-order ambisonics, which requires four channels. We also support videos prepared in this format.

Transcoding

Once the file with 360 video and 360 audio is uploaded, it is prepared for delivery to the various client devices. Like the video, the audio is processed into several formats. We extract the audio metadata (whether YouTube ambiX or Facebook 360 format) to determine the track and channel mapping, and then we transcode it into the various formats. As with all our videos, sometimes we transcode with multiple encoder settings so we can do comparisons to arrive at the best possible overall listener experience. We also prepare a stereo binaural rendering that is compatible with all legacy clients and also serves as a fallback if anything goes wrong.

The audio is prepared separately from the video, and the two are combined for delivery to clients through adaptive streaming protocols.

Client delivery

Different clients have different capabilities and support different video container/codec formats. There isn’t yet a single format that all our devices support, so we prepare a different format for iOS, Android, and web browsers. We control the audio-visual player on all these devices, so we are able to implement special behavior as needed, but we prefer extant code that is well-tested and doesn’t require extra time to implement. For that reason, on iOS we prefer MP4 as the container, and on Android and web browsers we prefer WebM. On both iOS and Android, decoding 10 channels of AAC audio is not natively supported or hardware accelerated, unlike monaural or stereo tracks. This, together with the aforementioned problems with AAC and eight- or 10-channel audio, led us to look at different codecs. Opus is being used by others for spatial audio, and there is ongoing work to make compression better using the Opus codec. It is a modern and open codec, and it decodes faster in software than AAC. This made Opus a natural choice, especially for the WebM container. Opus is not currently supported in MP4 by most encoders or decoders. However, there is a draft proposal for Opus in MP4 and a work in progress to support it in ffmpeg.

We had a few challenges transcoding the three-track audio format in the uploaded file (“4+4+2”) into a single 10-channel Opus track. As with AAC, the allowed channel mappings and LFE channel were a problem. However, Opus allows for an undefined channel mapping family (family 255), which means that the channels are not in a known layout. This works well enough because we control both the encoding and decoding, and we can ensure that the layout is understood the same on both ends. We transmit channel layout information in the streaming manifest. In the future, as spatial audio within Opus matures, there may be specific channel mappings and enhanced encoding techniques to reduce file size and/or increase quality. Our clients will be able to decode them with minimal or no change.
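To show where mapping family 255 appears in a stream, the sketch below builds the Opus identification header as laid out in RFC 7845, section 5.1. The choice of one uncoupled stream per channel, the identity channel order, and the zero pre-skip are assumptions made for the example, not a description of how the Facebook transcoder configures its streams.

```python
import struct


def opus_head(channels, sample_rate=48000, pre_skip=0, mapping_family=255):
    """Build an Opus identification header (RFC 7845, section 5.1)."""
    head = struct.pack(
        "<8sBBHIhB",
        b"OpusHead",
        1,               # version
        channels,
        pre_skip,
        sample_rate,     # original input sample rate, informational
        0,               # output gain
        mapping_family,
    )
    if mapping_family != 0:
        # Assumption: one uncoupled (mono) stream per channel, identity order.
        stream_count, coupled_count = channels, 0
        head += struct.pack("<BB", stream_count, coupled_count)
        head += bytes(range(channels))
    return head


header = opus_head(10)
```

Because family 255 assigns no meaning to the channels, the header alone does not tell a decoder which channels are spatial and which are head-locked, which is why the layout has to travel separately, for example in the streaming manifest.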

Future directions

We are in an expanding and evolving field with spatial audio — the formats we’ve taken for granted for non-spatial video and audio are now changing and improving. The work we’ve done is a great beginning in bringing these experiences to life, but there’s more to do. Components up and down the stack — from the Workstation to the video file format — need to change. Currently, we are working toward supporting an upload file format that can store all audio in one track, and potentially use a lossless encoding. But we’re also interested in the work to improve compression for spatial audio in Opus. We are interested in exploring adaptive bitrate and perhaps adaptive channel layout to improve the experience for people with limited bandwidth, or enough bandwidth to receive even higher quality. It’s an exciting space to be in, and we’re looking forward to contributing more to this ecosystem.

For an example of the new spatial audio done in the Facebook 360 Spatial Workstation, check out this 360 video from the JauntVR page.